Azure, AI, and Analytics: Make Them Pay for Themselves

Why AI and analytics are under the microscope

Across mid-market and SMB organisations in the UAE, KSA, Qatar and Europe, the conversation about AI has shifted. Finance leaders no longer ask “What can AI do?” but “Where is the impact on P&L, and what does it cost us to run in Azure every month?” At the same time, cloud has become a highly visible operating expense, and boards expect to see it managed with the same discipline as any other major line item.

For data leaders running Azure estates in the 25k–100k USD per year range, this creates a double bind. On one side, business stakeholders want faster insights and smarter automation powered by AI; on the other, finance is pushing for tighter controls, clearer ROI, and fewer experiments that never make it into production. The result is that AI and analytics are under more scrutiny than ever before.

Surveys of global CFOs show a widening gap between AI ambition and AI execution. Many finance leaders say they feel accountable for ensuring AI investments deliver measurable value, but point to skills gaps, weak governance, and poor data quality as reasons why proof of ROI is still patchy. For Azure customers, this often shows up as stalled pilots, rising cloud bills, and a lack of credible before-and-after metrics.

Start with FinOps for data and AI

Before adding more AI use cases or new Azure services, mid-market organisations benefit from adopting a FinOps mindset. FinOps treats cloud as a variable cost base to be actively managed rather than a fixed utility bill, with engineering, product, and finance all sharing responsibility for the final monthly spend. For data and AI workloads, this is often the fastest way to free up budget for innovation without asking for more money.

Three practices usually deliver quick wins. First, implement consistent tagging and cost allocation so every data product, model, and environment in Azure has an owner and a budget. Second, right-size and auto-scale compute for analytics and AI; many virtual machines and databases are provisioned for peak and then left oversized indefinitely. Third, use storage tiering and lifecycle policies so cold or archival data automatically moves from the hot tier to lower-cost cool or archive tiers in Azure Blob Storage. Together, these steps reduce waste and create a clear cost model for each workload.
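To make the first practice concrete, here is a minimal sketch of tag-based cost allocation. It assumes cost data has already been exported into simple records; the field names (`resource`, `cost_usd`, `tags`), the `owner` tag, and all figures are hypothetical and not taken from any specific Azure export format.

```python
from collections import defaultdict

# Hypothetical cost records, loosely shaped like rows from a monthly cost export.
# Field names, resource names and amounts are illustrative assumptions only.
records = [
    {"resource": "vm-analytics-01", "cost_usd": 420.0, "tags": {"owner": "finance-bi", "env": "prod"}},
    {"resource": "sql-forecast", "cost_usd": 310.0, "tags": {"owner": "supply-chain", "env": "prod"}},
    {"resource": "vm-ml-sandbox", "cost_usd": 275.0, "tags": {}},
    {"resource": "storage-raw", "cost_usd": 95.0, "tags": {"owner": "finance-bi", "env": "dev"}},
]


def allocate_by_owner(rows):
    """Sum cost per 'owner' tag and list resources that have no owner."""
    totals = defaultdict(float)
    untagged = []
    for row in rows:
        owner = row["tags"].get("owner")
        if owner is None:
            # Spend without an owner cannot be charged back to a budget.
            untagged.append(row["resource"])
            owner = "UNALLOCATED"
        totals[owner] += row["cost_usd"]
    return dict(totals), untagged


totals, untagged = allocate_by_owner(records)
for owner, cost in sorted(totals.items()):
    print(f"{owner}: {cost:.2f} USD")
print("Resources missing an owner tag:", untagged)
```

The size of the `UNALLOCATED` bucket is a useful first metric in its own right: the smaller it gets, the more of the Azure bill can be discussed workload by workload.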

Pick fewer, higher-ROI AI use cases

In an environment where every dollar is scrutinised, broad AI experimentation without a strong value story is hard to defend. Mid-market CFOs are more likely to back a small number of projects that clearly reduce costs, improve cash flow, or protect revenue than a long list of pilots with vague benefits. That means data leaders must be selective about where they deploy scarce engineering and Azure budget.

The strongest candidates share a few traits. They address a specific, measurable pain point such as days sales outstanding, forecast accuracy, customer churn, or process throughput. They have short implementation cycles and limited integration complexity, so benefits are visible in the same budget year. And they can be expressed in simple unit economics, such as cost per invoice processed or cost per customer interaction, which can then be tracked as AI is introduced. Examples in this category include collections prioritisation, inventory optimisation, and frontline agent assistance.
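As a rough illustration of the unit-economics framing, the sketch below compares cost per invoice before and after an AI-assisted process is introduced. All figures are invented for the example, and the assumption that the AI run cost can be isolated as a single monthly Azure amount is a simplification.

```python
def cost_per_unit(total_cost, units):
    """Return cost per processed unit (for example, per invoice)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return total_cost / units


# Hypothetical monthly figures in USD, for illustration only.
baseline_cost = 18000.0   # staff and tooling cost before AI
baseline_volume = 12000   # invoices processed per month before AI

ai_process_cost = 15000.0  # staff and tooling cost after AI is introduced
ai_azure_cost = 1200.0     # assumed additional Azure run cost for the AI workload
ai_volume = 13500          # invoices processed per month after AI

baseline = cost_per_unit(baseline_cost, baseline_volume)
with_ai = cost_per_unit(ai_process_cost + ai_azure_cost, ai_volume)

# Saving per invoice, scaled to the new monthly volume.
saving_per_invoice = baseline - with_ai
monthly_saving = saving_per_invoice * ai_volume

print(f"Cost per invoice before AI: {baseline:.2f} USD")
print(f"Cost per invoice with AI:   {with_ai:.2f} USD")
print(f"Estimated monthly saving:   {monthly_saving:.2f} USD")
```

Including the Azure run cost in the "with AI" figure matters: it keeps the comparison honest and gives finance a single number that already nets off the cloud spend.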

Treat data quality and governance as cost levers

Data governance is often framed as a compliance or IT hygiene issue, but for this audience it is equally a cost and ROI lever. Poor data quality leads directly to rework in reporting, higher compute consumption from inefficient pipelines, and AI projects that never make it into production because the inputs cannot be trusted. Every failed initiative represents not only wasted Azure spend but also lost credibility with finance and the business.

Investment in data governance is growing rapidly across Europe and the Middle East, driven by regulations such as GDPR, national digital strategies, and programmes like Saudi Arabia’s Vision 2030. Organisations that implement consistent cataloguing, lineage, and policy enforcement across their Azure data estate find it easier to control duplication, standardise definitions, and respond to audits. That reduces operational overhead and creates the trust needed to embed AI into core processes.

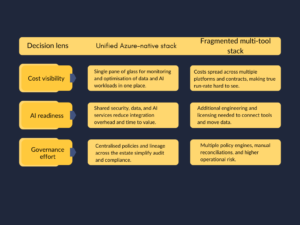

Simplify the Azure data and AI stack

Many mid-market Azure environments have grown organically: a data warehouse for one use case, a data lake for another, separate tools for BI, integration, and machine learning layered on top. Over time, this fragmentation raises costs through duplicated capabilities, additional data movement, and more complex governance. It also makes it harder for finance to understand what they are paying for and why.

A more sustainable direction is to gradually consolidate onto a smaller set of Azure-native and unified services, without forcing disruptive rewrites. The aim is not to chase every new offering, but to standardise on a reference stack that is well understood by both engineering and finance. That simplification improves cost predictability, speeds up future delivery, and reduces the risk surface.

For data leaders, this kind of consolidation also simplifies conversations with finance. When most new initiatives land on a standard Azure pattern with known unit costs, it becomes much easier to estimate the cost of a new AI use case and then compare forecast with actual once it is in production. That reduces surprises and builds confidence in the roadmap.
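The forecast-versus-actual comparison described above can be sketched in a few lines. The workload names, amounts, and the 10 percent tolerance are hypothetical; in practice the thresholds would be agreed with finance.

```python
def variance_report(forecast, actual, tolerance_pct=10.0):
    """Compare forecast and actual monthly cost per workload.

    Returns one entry per forecast workload with the percentage variance
    and a flag for workloads that exceed the agreed tolerance.
    """
    report = []
    for workload, planned in forecast.items():
        if planned <= 0:
            raise ValueError(f"forecast for {workload} must be positive")
        spent = actual.get(workload, 0.0)
        variance_pct = (spent - planned) / planned * 100.0
        report.append({
            "workload": workload,
            "forecast_usd": planned,
            "actual_usd": spent,
            "variance_pct": variance_pct,
            "over_tolerance": variance_pct > tolerance_pct,
        })
    return report


# Hypothetical monthly Azure costs in USD, for illustration only.
forecast = {"collections-prioritisation": 2000.0, "agent-assist": 3500.0}
actual = {"collections-prioritisation": 2150.0, "agent-assist": 4200.0}

report = variance_report(forecast, actual)
for row in report:
    status = "REVIEW" if row["over_tolerance"] else "ok"
    print(f"{row['workload']}: {row['variance_pct']:+.1f}% vs forecast ({status})")
```

A report like this is most useful when it is reviewed on a fixed cadence, so that an overrun prompts a conversation about the workload rather than a surprise at month end.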

Speak in the CFO’s language

Even the best-run Azure estate and the most elegant AI models will struggle for funding if their impact cannot be described in financial terms. CFOs and finance teams think in unit economics, risk, and cash flow, not in clusters, parameters, or pipelines. Successful data leaders translate technical progress into metrics that fit neatly into this worldview.

Practically, that means reporting on measures such as cost per thousand invoices processed, cost per customer served, or cost per support ticket, and then showing how AI and analytics move those numbers. It also means linking initiatives to outcomes like reduced write-offs, faster cash collection, fewer manual hours, or lower compliance penalties. Global studies of AI adoption show that organisations which close the gap between ambition and execution do so by modernising data foundations, clarifying governance ownership, and shifting to performance-based metrics rather than one-off project milestones.

From cost centre to self-funding engine

For Azure-centric mid-market and SMB organisations in the UAE, KSA, Qatar and Europe, the opportunity is to turn AI and analytics from a cost centre into a self-funding engine. The organisations that succeed are not necessarily those with the most sophisticated models, but those that combine FinOps discipline, focused high-ROI use cases, and strong data governance on a simplified Azure stack. When every new initiative comes with a clear cost profile and a credible value story, finance stops seeing AI as an experiment and starts viewing it as a lever for growth.

The question, then, is no longer whether you can afford to invest in AI and analytics. It is whether you are managing your Azure, data, and governance foundations well enough for those investments to consistently pay for themselves.
